- 25/10/2017

- Posted by: Millon Unika

- Category: All, Business, Digital Marketing, SEO / Social Media, Web Design

Common SEO Mistakes We Must Avoid for Better Ranking

In the present scenario, SEO is key to any successful business. It is one of the most effective ways to generate new leads and customers. SEO strategies have evolved over time through various techniques. To build a great SEO strategy, we should explore every opportunity and technique it offers and avoid the mistakes that can harm our ranking. It is a vast subject, and without proper focus and expertise it is difficult to get the desired results. We also make simple, common mistakes that can hurt our website's ranking. Here we will discuss the common SEO mistakes we must avoid for better ranking, and how to overcome them.

1. Keyword Gaps

Keyword gaps are one of the most common mistakes we make, and they can harm our SEO results. Overlooking this simple mistake can weaken your online marketing strategy, and you may fail to capitalize on some great marketing opportunities.

Keyword gaps are target keywords that matter for your business, and that your competitors often rank for, but that your own content does not cover. You need to fix these gaps fast; otherwise your ranking will slip relative to your competitors over time. Therefore you need to know which keywords you are missing and how to fill the gaps in your keywords and content.

How to Avoid the Mistake

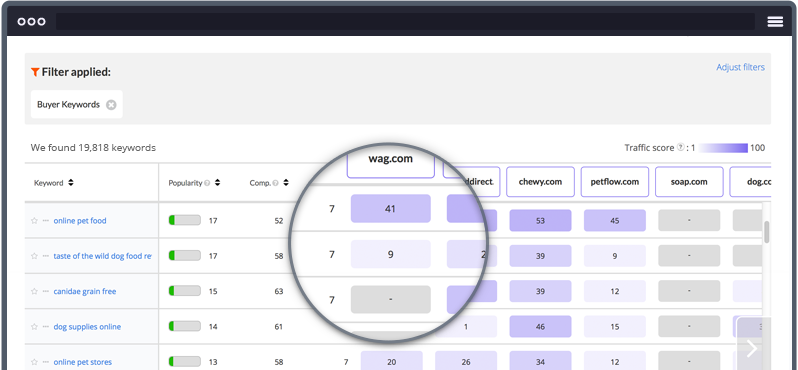

There are many great tools available online for keyword research and analysis. You can use tools such as SEMrush, Google Keyword Planner and Ahrefs to find the gaps in your content and keyword strategy, and to perform competitor keyword analysis. These tools give you a full report on your keyword strategy: the high-value keywords for your business and your competitors' best-performing keywords, which you can then work into your own strategy for better SEO results and ranking.
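
At its core, a keyword gap check is a set comparison: keywords your competitors target, minus the keywords you already target. The sketch below shows only that comparison, assuming you already have plain keyword lists (for example, copied from one of the tools above); the function name `keyword_gaps` and all keywords and competitor names are hypothetical. Real tools add search volume and ranking data on top of this.

```python
from collections import Counter


def keyword_gaps(own_keywords, competitor_keywords):
    """Return (keyword, competitor_count) pairs for keywords that competitors
    target but that are missing from your own list, most widely shared first."""
    own = {k.strip().lower() for k in own_keywords}
    counts = Counter()
    for keywords in competitor_keywords.values():
        # Normalise and de-duplicate so each competitor counts a keyword once.
        for keyword in {k.strip().lower() for k in keywords}:
            if keyword not in own:
                counts[keyword] += 1
    # Sort by number of competitors (descending), then alphabetically.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical keyword lists, e.g. copied from a keyword research tool's report.
my_keywords = ["SEO services", "web design"]
competitors = {
    "competitor-a.example": ["seo services", "local seo", "seo audit"],
    "competitor-b.example": ["web design", "Local SEO", "ppc management"],
}

for keyword, count in keyword_gaps(my_keywords, competitors):
    print(f"{keyword}: targeted by {count} competitor(s)")
```

Keywords shared by several competitors (here "local seo") are usually the first gaps worth filling.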

2. Inconsistent URL Format

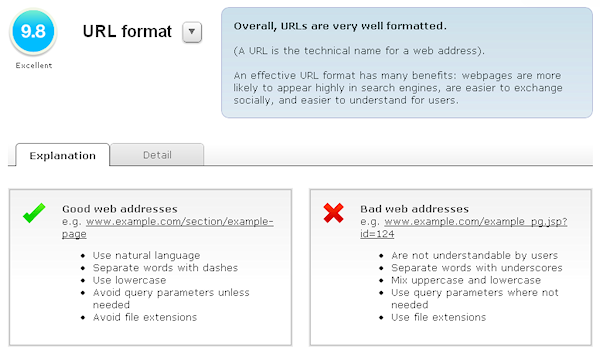

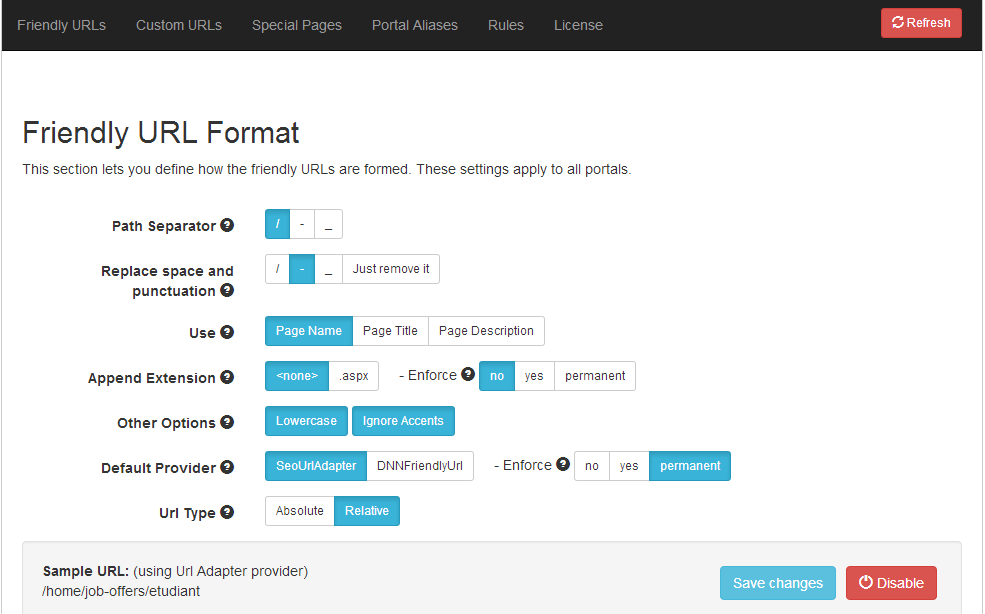

A proper, consistent URL format is one of the most important parts of your SEO strategy. Search engines crawl your pages by their URLs, and your URL format tells them a lot about your website. Not creating consistent, SEO-friendly URL formats is one of the most common mistakes we make, and it can damage our SEO results. Search engines may get the wrong impression of your site from inconsistent, non-SEO-friendly URLs.

Suppose your webpage is reachable under two different URL formats, such as https://www.xyz.com and xyz.com. The search engine may treat these as two different websites, so the same content appears twice and is seen as duplicate content. It may also fail to recognize which one is the original site, which can hurt your rankings. Your indexing, keywords and backlinks will also be split across the two versions. This simple mistake can undermine your whole SEO strategy, so always pay extra attention when formatting your webpage URLs.

How to Avoid the Mistake

There are many ways to avoid this scenario, but the simplest is to stick to one fixed URL format. Choose either the www version (https://www.xyz.com) or the non-www version (https://xyz.com), and maintain it strictly across all of your web pages' URLs. Both formats have the same potential; the catch is that you need to pick one and use it for every page.
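
One way to keep every link on the same format is to normalise URLs before publishing them. The sketch below assumes, purely for illustration, that the site has chosen https://www.xyz.com as its preferred form; the constants and the `canonical_url` function are hypothetical, and only the standard library's `urllib.parse` is used.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption: the site owner has picked https://www.xyz.com as the one format to keep.
PREFERRED_SCHEME = "https"
PREFERRED_HOST = "www.xyz.com"
SITE_HOSTS = {"xyz.com", "www.xyz.com"}


def canonical_url(url):
    """Rewrite any variant of one of this site's URLs into the single preferred format.

    URLs on other hosts are returned unchanged. Ports and #fragments are dropped.
    """
    # urlsplit only recognises the host when a scheme is present, so add one to
    # bare inputs such as "xyz.com/about".
    full = url if "://" in url else "http://" + url
    parts = urlsplit(full)
    host = parts.hostname or ""  # hostname is already lower-cased by urlsplit
    if host not in SITE_HOSTS:
        return url
    path = parts.path or "/"
    return urlunsplit((PREFERRED_SCHEME, PREFERRED_HOST, path, parts.query, ""))


for variant in ["xyz.com", "http://XYZ.com/about", "https://www.xyz.com/about"]:
    print(variant, "->", canonical_url(variant))
```

All three variants come out as the same https://www.xyz.com form, which is exactly the consistency this section asks for.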

3. 301 & 302 Redirects

301 and 302 redirects are another common mistake that harms our SEO ranking big time, and even many professionals make it. You need to set up redirects properly for your webpages. A 301 redirect permanently points your old page to its newly redesigned page, whereas a 302 redirect is only temporary. When you redesign a webpage or move its content to a new URL without a proper redirect, the search engine treats the new page as a brand-new one with no indexing history. It also cannot connect the old page to the new one, and treats the old page as having vanished. This affects the page's ranking in search results, and your website traffic can drop drastically.

How to Avoid the Mistake

You need to fix 301 and 302 redirect issues to avoid a drop in website traffic. Always set a 301 redirect from each old URL to its newly designed page, so that search engines can relate the new page to the old indexed one. Avoid using 302 redirects for pages that have moved permanently.
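
Redirects are usually configured in your web server or CMS, but the HTTP response itself is simple: a 301 status code plus a `Location` header pointing at the new page. The sketch below shows that response using Python's standard `http.server` module; the old and new paths in `REDIRECTS` and the helper `redirect_for` are hypothetical examples, not part of any particular setup.

```python
from http.server import BaseHTTPRequestHandler
from urllib.parse import urlsplit

# Hypothetical map from old, already-indexed paths to their redesigned pages.
REDIRECTS = {
    "/old-services.html": "https://www.xyz.com/services/",
    "/about-us.php": "https://www.xyz.com/about/",
}


def redirect_for(path):
    """Return (status, location) if the page has moved, otherwise None."""
    target = REDIRECTS.get(urlsplit(path).path)  # ignore any ?query string
    if target is None:
        return None
    # 301 Moved Permanently: the move is final, so crawlers should replace the
    # old URL with the new one. 302 Found would mark the move as temporary.
    return 301, target


class RedirectHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        redirect = redirect_for(self.path)
        if redirect is None:
            self.send_error(404, "Page not found")
            return
        status, location = redirect
        self.send_response(status)
        self.send_header("Location", location)
        self.end_headers()


# To try it locally (blocks until interrupted):
# from http.server import HTTPServer
# HTTPServer(("127.0.0.1", 8000), RedirectHandler).serve_forever()
```

Keeping the old-to-new mapping in one place makes it easy to check that every retired URL gets a permanent redirect rather than a 302 or a dead end.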

4. Not Focusing on Quality & Relevant Content

Content is the key to capitalizing on online marketing opportunities. Unique, relevant, high-quality content can give you great SEO results and better ranking visibility. Unfortunately, we often do not focus enough on content and leave this important part out of our SEO strategy. Duplicate or plagiarized content will affect your website ranking negatively, and search engines may penalize you for it. Irrelevant content on your landing pages can also lead visitors to abandon your page, increasing your bounce rate and losing you customer leads.

How to Overcome

Unique content that is relevant to your website and uses valuable keywords can give your SEO results high mileage. You must avoid any duplicate content. Always write your webpage content with a clear call to action and relevant information to keep your bounce rate down. High-quality, relevant content with useful information and inbound links always helps your visitors, and it will certainly help increase your website traffic.
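
Search engines do not publish exactly how they detect duplicate content, but you can roughly check your own pages for near-duplicates before publishing. The sketch below uses a common generic technique, word "shingles" (overlapping 3-word sequences) compared with Jaccard similarity; it is an illustration, not how any search engine actually works, and the page texts are made up.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping `size`-word sequences ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b, size=3):
    """Jaccard similarity of two texts' shingle sets, from 0.0 (nothing shared) to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a or not b:
        return 0.0  # at least one text is too short to compare
    return len(a & b) / len(a | b)


page_london = "We offer affordable SEO services for small businesses in London."
page_manchester = "We offer affordable SEO services for small businesses in Manchester."
about_page = "Our team has designed websites for local shops since 2010."

print(round(similarity(page_london, page_manchester), 2))  # near-duplicate pair: 0.78
print(round(similarity(page_london, about_page), 2))       # unrelated pages: 0.0
```

Two location pages that differ only in the city name score high, which is a hint to rewrite them with genuinely unique content.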

5. Misusing robots.txt, User-agent, and Disallow

This is one of the easiest and most commonly found mistakes on any website or page, but it can have a massive impact, downgrading your page ranking. It also has a huge negative effect on search engine indexing.

For any website, robots.txt is a key file: it sits at the root of your site and guides search engines while they crawl it, telling them which pages they may crawl. With robots.txt you can block pages that you don’t want crawled by search engines. You do not need a robots.txt file if you want your whole website crawled without restriction. To block certain pages, the syntax is “User-agent:” followed by the bot’s name, and “Disallow:” followed by the paths that the bot should not crawl.

You need to be very careful when implementing this. A single wrong rule can stop search engines from crawling pages you want indexed, and this will damage your ranking. For example, with the rules “User-agent: *” and “Disallow: /”, search engines will not crawl any part of your website.

This syntax can be a bit confusing, so you need to be proficient with it, and it may be better to hire a professional for this job. It is also a good idea to try your rules on dummy pages first.
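
A safe way to "try before you deploy" is to run your robots.txt rules through a parser and check which URLs a crawler would be allowed to fetch. The sketch below uses Python's standard `urllib.robotparser`; the `/admin/` path and test URLs are hypothetical, and this parser follows the original robots.txt conventions, so it may differ from Google's own matching in edge cases such as wildcards.

```python
from urllib.robotparser import RobotFileParser


def check_rules(robots_txt, urls, agent="Googlebot"):
    """Parse robots.txt text and report which URLs the given crawler may fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(agent, url) for url in urls}


# Targeted rule: only the (hypothetical) /admin/ section is blocked.
SAFE_RULES = """User-agent: *
Disallow: /admin/"""

# The mistake described above: every crawler is blocked from the whole site.
BLOCK_ALL_RULES = """User-agent: *
Disallow: /"""

TEST_URLS = [
    "https://www.xyz.com/",
    "https://www.xyz.com/services/",
    "https://www.xyz.com/admin/login",
]

for name, rules in [("safe", SAFE_RULES), ("block-all", BLOCK_ALL_RULES)]:
    print(name, check_rules(rules, TEST_URLS))
```

With the targeted rule only the admin URL is blocked; with “Disallow: /” every URL comes back as not allowed, which is exactly the mistake to catch before it goes live.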

Thanks for the great tips! I’m new to online marketing, and this is really helpful! Since getting started, I’ve been bombarded by “spin writers” and such to create a TON of content quickly, but you seem to say that these search engines have become sophisticated enough to determine when your content is crap. Am I understanding that right?